TLS/WSS Proxy

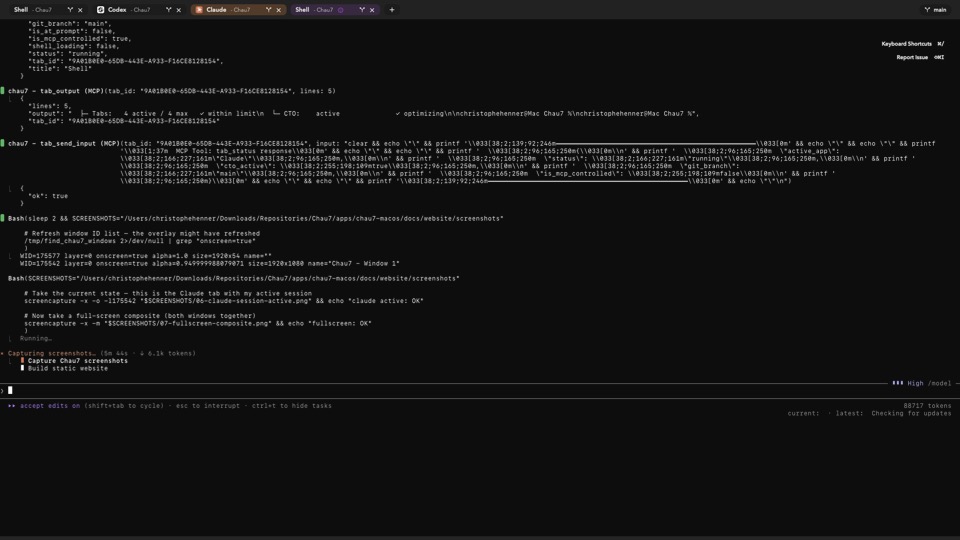

Chau7 runs a local proxy that captures every API call your AI agent makes. You will finally know what is happening on the wire.

What is the TLS/WSS Proxy in Chau7?

The TLS/WSS Proxy in Chau7 is a local proxy that intercepts HTTPS and WebSocket traffic between AI agents and LLM API endpoints. It works by redirecting API base URL environment variables (e.g., ANTHROPIC_BASE_URL, OPENAI_BASE_URL) to a local endpoint. Chau7's proxy captures API calls to OpenAI, Anthropic, and Google Gemini.

Captured API calls in Chau7 are parsed and fed into downstream analytics features: token counting, cost tracking, and latency measurement. Each call is associated with its originating tab and session, creating a complete per-agent audit trail of API usage. The proxy is off by default; enable "API Analytics" in Chau7's settings to turn it on.
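To make the per-tab audit trail concrete, here is a minimal sketch of how captured calls could be rolled up into token, cost, and latency totals. It is not Chau7's actual data model: the `CapturedCall` fields, the `summarize_by_tab` helper, and the prices in `PRICE_PER_MTOK` are all hypothetical and chosen only for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CapturedCall:
    """One intercepted API call (hypothetical record shape)."""
    tab_id: str
    session_id: str
    provider: str
    input_tokens: int
    output_tokens: int
    latency_ms: float


# Placeholder prices in USD per million tokens. These are NOT real provider prices.
PRICE_PER_MTOK = {"example-provider": {"input": 1.0, "output": 2.0}}


def call_cost(call):
    """Cost of a single call in USD under the placeholder price table."""
    price = PRICE_PER_MTOK[call.provider]
    total = call.input_tokens * price["input"] + call.output_tokens * price["output"]
    return total / 1_000_000


def summarize_by_tab(calls):
    """Aggregate call count, tokens, cost, and average latency per tab."""
    totals = defaultdict(
        lambda: {"calls": 0, "tokens": 0, "cost_usd": 0.0, "latency_ms": 0.0}
    )
    for call in calls:
        row = totals[call.tab_id]
        row["calls"] += 1
        row["tokens"] += call.input_tokens + call.output_tokens
        row["cost_usd"] += call_cost(call)
        row["latency_ms"] += call.latency_ms
    return {
        tab: {**row, "avg_latency_ms": row["latency_ms"] / row["calls"]}
        for tab, row in totals.items()
    }
```

Keying the summary on `tab_id` mirrors the association described above; grouping by `session_id` instead would only require changing the key.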

How to intercept LLM API calls from terminal AI agents

Chau7 intercepts LLM API calls through its built-in TLS/WSS proxy. The proxy redirects the API base URL environment variables to a local endpoint on localhost (127.0.0.1), which presents a locally generated self-signed certificate. Enable "API Analytics" in Chau7's settings to activate the proxy.

Chau7's proxy supports OpenAI, Anthropic, and Google Gemini. It redirects the relevant *_BASE_URL environment variables so that API traffic flows through the local proxy for inspection.
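The redirection described above can be sketched as follows. This is an illustration of the general technique, not Chau7's implementation: the port in `PROXY_ORIGIN` and the per-provider path prefixes are assumptions, and only the two variable names given in the text are used.

```python
# Hypothetical local proxy address. The real port is chosen by Chau7
# and is not stated in this document.
PROXY_ORIGIN = "https://127.0.0.1:8443"

# Base URL variables named in the text. The path prefix per provider is an
# assumption, used here so a proxy could tell the upstream APIs apart.
PROVIDER_VARS = {
    "ANTHROPIC_BASE_URL": "anthropic",
    "OPENAI_BASE_URL": "openai",
}


def proxied_env(base_env, origin=PROXY_ORIGIN):
    """Return a copy of base_env with the base URL variables pointed at the proxy."""
    env = dict(base_env)
    for var, prefix in PROVIDER_VARS.items():
        env[var] = f"{origin}/{prefix}"
    return env
```

An agent launched with this environment, for example via `subprocess.run(cmd, env=proxied_env(os.environ))`, would send its API requests to the local endpoint, provided the agent's SDK honors these variables.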

How does Chau7's TLS/WSS proxy compare to other terminals?

No other terminal includes a built-in TLS proxy for intercepting LLM API traffic. iTerm2, Warp, Alacritty, and Kitty have no API interception capability.

External tools like mitmproxy require manual setup and do not integrate with terminal sessions. Chau7's proxy associates each API call with its originating tab and feeds data into Chau7's built-in token counting, cost tracking, and latency analysis.

Is the Chau7 proxy secure? Does it send data externally?

Chau7's proxy runs entirely on the local machine. No API traffic, tokens, or request content is sent to Chau7 servers or any third party.

The locally generated self-signed certificate in Chau7 is scoped to localhost (127.0.0.1). Chau7 does not modify request or response payloads. The proxy only reads the data for analytics purposes.
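One way to check this for yourself is to confirm that the redirected base URLs point at a loopback address. The helper below is a small sketch using only the Python standard library; the function name `is_loopback_url` is hypothetical, and the check covers only the URL's host, not what the proxy does with the traffic afterwards.

```python
import ipaddress
from urllib.parse import urlsplit


def is_loopback_url(url):
    """Return True if the URL's host is localhost or a loopback IP literal."""
    host = urlsplit(url).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # A hostname other than localhost; it is not resolved here.
        return False
```

Running it against the value of `ANTHROPIC_BASE_URL` or `OPENAI_BASE_URL` inside a Chau7 tab should return `True` when the proxy is active, assuming the variables are set as described.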

Does the Chau7 proxy add latency to API calls?

Chau7's proxy adds some latency because it buffers the full response body synchronously for inspection and analytics. The overhead is generally small relative to LLM API response times, which typically take seconds.
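The effect of full-body buffering can be illustrated with a toy relay. This is a simulation rather than Chau7's code: `relay_buffered` models the behavior described above, while `relay_streaming` is a hypothetical contrast that forwards each chunk as it arrives.

```python
def upstream(chunks, log):
    """Simulated upstream response that records each chunk as it is produced."""
    for chunk in chunks:
        log.append(("upstream", chunk))
        yield chunk


def relay_buffered(source, log):
    """Read the whole response first, then forward it to the client."""
    body = list(source)
    for chunk in body:
        log.append(("client", chunk))
        yield chunk


def relay_streaming(source, log):
    """Forward each chunk to the client as soon as it arrives."""
    for chunk in source:
        log.append(("client", chunk))
        yield chunk


def chunks_before_first_delivery(relay, chunks):
    """Count upstream chunks produced before the client receives anything."""
    log = []
    for _ in relay(upstream(chunks, log), log):
        pass
    return next(i for i, (who, _) in enumerate(log) if who == "client")
```

With three chunks, the buffered relay delivers nothing until all three have been read, whereas the streaming relay delivers after the first. The added delay is therefore bounded by the time the upstream API takes to finish the response.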

Only API traffic redirected via the base URL environment variables passes through the proxy. All other network traffic is unaffected.

Questions this answers

- What is the TLS/WSS Proxy in the Chau7 terminal?
- How does Chau7's TLS/WSS proxy compare to other terminals?
- How do I intercept LLM API calls from terminal AI agents?
- Is the proxy secure? Does it send data externally?
- Does the proxy add latency to API calls?

Frequently asked questions

What is the TLS/WSS Proxy in the Chau7 terminal?

The TLS/WSS Proxy in Chau7 is a local proxy that intercepts HTTPS and WebSocket traffic between AI agents and LLM API endpoints by redirecting API base URL environment variables. Chau7's proxy captures API calls to OpenAI, Anthropic, and Google Gemini, then feeds parsed data into its token counting, cost tracking, and latency analysis features. It requires enabling API Analytics in settings.

How does Chau7's TLS/WSS proxy compare to other terminals?

No other terminal includes a built-in TLS proxy for intercepting LLM API traffic. iTerm2, Warp, Alacritty, and Kitty have no API interception capability. External tools like mitmproxy require manual setup and do not integrate with terminal sessions. Chau7's proxy associates each API call with its originating tab and feeds data into built-in analytics.

How do I intercept LLM API calls from terminal AI agents?

Chau7 intercepts LLM API calls through its built-in TLS/WSS proxy. The proxy redirects the API base URL environment variables to a local endpoint on localhost (127.0.0.1), which presents a locally generated self-signed certificate. Enable API Analytics in Chau7's settings to activate the proxy.

Is the proxy secure? Does it send data externally?

Chau7's proxy runs entirely on the local machine. No API traffic, tokens, or request content is sent to Chau7 servers or any third party. The locally generated self-signed certificate is scoped to localhost (127.0.0.1).

Does the proxy add latency to API calls?

Chau7's proxy adds some latency because it buffers the full response body synchronously for inspection. The overhead is generally small relative to LLM API response times.