Latency Tracking

Chau7 measures time-to-first-token and total request duration for every LLM API call, because "it feels slow" is not a metric.

What is Latency Tracking in Chau7?

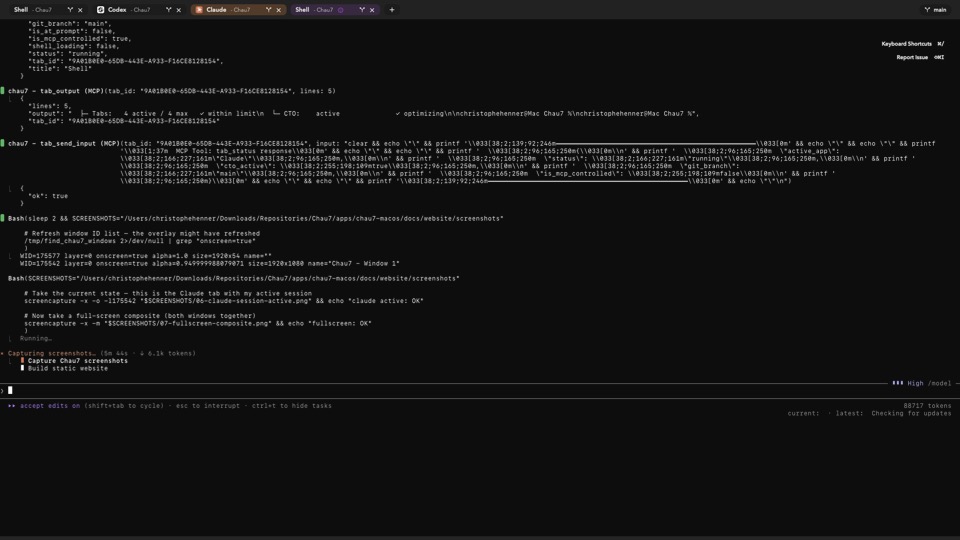

Latency Tracking is a feature in the Chau7 terminal that records both time-to-first-token (TTFT) and total request duration for every intercepted LLM API call. Chau7 captures TTFT by wrapping the upstream response with a firstByteReader that timestamps the exact moment the first byte arrives.

Both TTFT and total duration are stored per call in the SQLite database and associated with the originating tab, session, and run. This lets developers spot slow models, compare provider responsiveness, and understand where latency lives in their AI workflow.

How to measure LLM API latency in Chau7

Chau7 measures LLM API latency automatically through its built-in TLS/WSS proxy. For every intercepted API call, Chau7 records the time to first token and the total request duration (from request sent to response complete).

Each measurement is stored per call with provider and model metadata, and developers can review the data through Chau7's MCP server.

Which terminal emulators offer latency tracking?

Chau7 is the only terminal emulator that offers built-in latency tracking for LLM API calls. iTerm2, Warp, Alacritty, and Kitty have no API latency measurement features.

Provider dashboards from OpenAI and Anthropic show aggregate statistics but not per-call timing tied to specific coding sessions or terminal tabs. Chau7 records total request duration for every individual API call, associated with the specific tab and session where the call originated.

What latency data does Chau7 record?

Chau7 records two latency figures for every API call: time-to-first-token (TTFT), the delay until the first byte of the response arrives, and total request duration, the full round-trip time from request sent to response complete. Each measurement includes provider and model metadata so developers can compare performance across models.

This data is stored per call and associated with the originating tab, session, and run. Developers can use it to find slow calls, trace them back to where they originated, and see whether the time went into waiting for the first byte or into receiving the rest of the response.

Can I review latency data through the MCP server?

Yes. Chau7 exposes run telemetry through its MCP server, which includes latency data per API call with provider and model metadata. Developers can query this data programmatically.
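
MCP messages are JSON-RPC 2.0, and MCP servers expose their functionality as tools invoked with the tools/call method. This page does not name Chau7's telemetry tool, so the tool name get_run_telemetry and the run_id argument below are hypothetical placeholders; a real client would complete MCP's initialize handshake and then discover the actual tool names with tools/list. The Go sketch only builds the request message.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// rpcRequest is a JSON-RPC 2.0 request, the message format MCP uses.
type rpcRequest struct {
	JSONRPC string         `json:"jsonrpc"`
	ID      int            `json:"id"`
	Method  string         `json:"method"`
	Params  toolCallParams `json:"params"`
}

// toolCallParams are the parameters of an MCP tools/call request.
type toolCallParams struct {
	Name      string         `json:"name"`
	Arguments map[string]any `json:"arguments"`
}

// newTelemetryRequest builds a tools/call request. The tool name and the
// argument key are hypothetical placeholders, not documented Chau7 names.
func newTelemetryRequest(id int, runID string) ([]byte, error) {
	return json.Marshal(rpcRequest{
		JSONRPC: "2.0",
		ID:      id,
		Method:  "tools/call",
		Params: toolCallParams{
			Name:      "get_run_telemetry",
			Arguments: map[string]any{"run_id": runID},
		},
	})
}

func main() {
	msg, err := newTelemetryRequest(1, "run-123")
	if err != nil {
		panic(err)
	}
	fmt.Println(string(msg))
}
```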

Since each measurement includes provider and model information, developers can compare performance across different models by reviewing the telemetry data.

Why latency tracking matters

AI agent responsiveness depends on API latency, and latency varies wildly by provider, model, time of day, and prompt size. Without measurements, developers are stuck with subjective impressions.

Chau7 records total request duration for every API call. With this data, developers can make data-driven decisions about which model to use and when. Chau7's latency tracking turns "it feels slow" into actionable numbers.

Questions this answers

- What is Latency Tracking in the Chau7 terminal?

- Which terminal emulators offer latency tracking?

- How do I measure LLM API latency in the terminal?

- What latency data does Chau7 record?

- Can I review latency data through the MCP server?

Frequently asked questions

What is Latency Tracking in the Chau7 terminal?

Latency Tracking is a feature in the Chau7 terminal that records time-to-first-token (TTFT) and total request duration for every intercepted LLM API call. Chau7 associates each measurement with the originating tab, session, and run so developers can identify slow calls.

Which terminal emulators offer latency tracking?

Chau7 is the only terminal emulator that offers built-in latency tracking for LLM API calls. iTerm2, Warp, Alacritty, and Kitty have no API latency measurement. Provider dashboards show aggregate statistics but not per-call timing tied to specific coding sessions. Chau7 records total request duration for every individual API call.

How do I measure LLM API latency in the terminal?

Chau7 measures LLM API latency automatically through its built-in TLS/WSS proxy. For every intercepted API call, Chau7 records the time to first token and the total request duration (from request sent to response complete). Both metrics are stored per call with provider and model metadata.

What latency data does Chau7 record?

For every API call, Chau7 records time-to-first-token (TTFT) and total request duration (request sent to response complete). Each measurement includes provider and model metadata so developers can compare performance across models.

Can I review latency data through the MCP server?

Yes. Chau7 exposes run telemetry through its MCP server, which includes latency data per API call with provider and model metadata.