LLM Error Explanation

Chau7 turns a cryptic error into a human explanation with one click. No browser required.

What is LLM Error Explanation in Chau7?

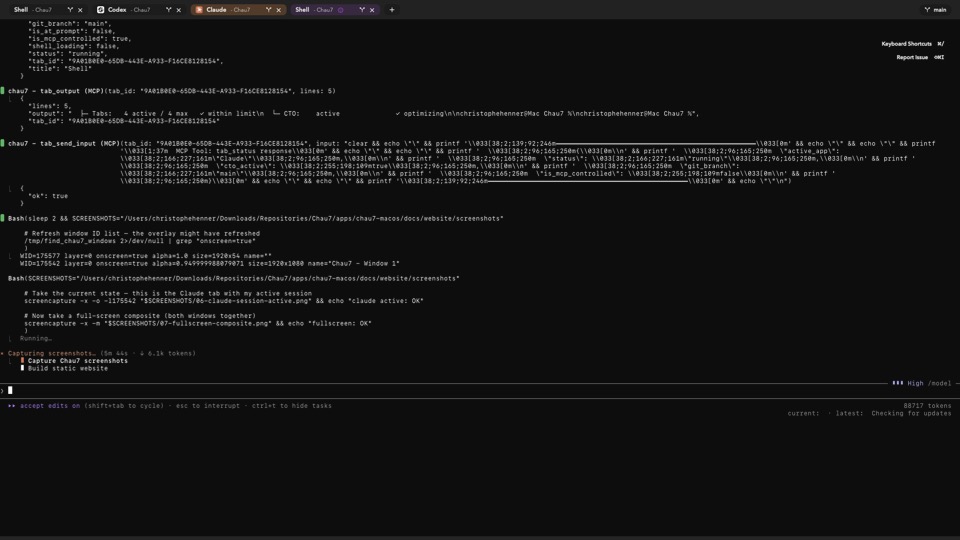

LLM Error Explanation is a feature in the Chau7 terminal that lets you send any terminal error to an LLM for instant analysis with a single click. Chau7 packages the error text and surrounding context into a prompt and sends it to your configured LLM provider.

The explanation is returned directly in the Chau7 terminal interface. You never leave the terminal to understand an error. Chau7's error explanation works independently of any AI agent running in the tab, so you can use it in plain shell sessions as well as alongside active agent sessions.

How to explain terminal errors with AI in Chau7

When an error appears in Chau7, click the error to send it for analysis. Chau7 captures the error text and surrounding context, packages them into a prompt optimized for clear explanations, and sends the prompt to your configured LLM provider.

The old workflow involves copying the error, opening a browser, pasting into ChatGPT, waiting for a response, and switching back to the terminal. Chau7 replaces that entire sequence with a single click. The explanation appears inside the terminal within seconds.
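As a rough illustration of the packaging step described above, the sketch below combines an error and its surrounding output into chat-style messages. It is not Chau7's actual prompt: the function name `build_error_prompt`, the prompt wording, and the message layout are all assumptions made for the example.

```python
from typing import Dict, List


def build_error_prompt(error_text: str, context_lines: List[str]) -> List[Dict[str, str]]:
    """Package an error and its surrounding output into chat messages.

    Hypothetical helper: the name, wording, and layout are illustrative
    assumptions, not Chau7's real prompt.
    """
    context = "\n".join(context_lines)
    system = (
        "You explain terminal errors. State the likely cause in plain "
        "language, then suggest a concrete fix."
    )
    user = (
        "Error:\n"
        f"{error_text.strip()}\n\n"
        "Surrounding terminal output:\n"
        f"{context}"
    )
    # One system message that sets the task, one user message with the data.
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]


messages = build_error_prompt(
    "zsh: command not found: kubectl",
    ["$ kubectl get pods", "zsh: command not found: kubectl"],
)
```

Keeping the instructions in the system message and the captured text in the user message is one common way to separate the task from the data being analyzed.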

Which LLM providers does Chau7 support for error explanation?

Chau7 supports OpenAI, Anthropic, Ollama (local), and any custom endpoint that accepts the standard chat completion API format. You configure your preferred provider and API key in Chau7's settings.

For developers who prefer local inference, Chau7 works with Ollama so the error text never leaves your machine. Chau7 sends requests to whichever provider you configure, using the same standard API format across all of them.
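To make "the standard chat completion API format" concrete, here is a minimal sketch of what a request in that format could look like for a hosted provider and for a local Ollama instance. It only builds the request and does not send it. The helper `build_chat_request`, the `PROVIDER_BASE_URLS` table, and the model name are assumptions for illustration and do not reflect Chau7's settings or internals. Anthropic is left out because this page does not say how Chau7 addresses it.

```python
import json
from typing import Dict, List, Optional

# Base URLs for OpenAI's API and for Ollama's OpenAI-compatible local endpoint.
# A custom endpoint would supply its own base URL.
PROVIDER_BASE_URLS = {
    "openai": "https://api.openai.com/v1",
    "ollama": "http://localhost:11434/v1",
}


def build_chat_request(
    base_url: str,
    model: str,
    messages: List[Dict[str, str]],
    api_key: Optional[str] = None,
) -> Dict[str, object]:
    """Build (but do not send) a chat completion request.

    Hypothetical helper for illustration only.
    """
    headers = {"Content-Type": "application/json"}
    if api_key:
        # Hosted providers expect a bearer token; a local Ollama does not need one.
        headers["Authorization"] = f"Bearer {api_key}"
    body = {"model": model, "messages": messages}
    return {
        "url": base_url.rstrip("/") + "/chat/completions",
        "headers": headers,
        "body": json.dumps(body),
    }


example_messages = [
    {"role": "user", "content": "Explain: zsh: command not found: kubectl"}
]
# "llama3" is a placeholder model name; use whichever model you have pulled locally.
request = build_chat_request(PROVIDER_BASE_URLS["ollama"], "llama3", example_messages)
```

Because the body has the same shape for every provider in this sketch, switching providers only changes the base URL, the model name, and whether an API key is attached.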

How does Chau7's LLM error explanation compare to other terminals?

No other terminal emulator offers built-in LLM error explanation. iTerm2, Alacritty, Kitty, and Warp all require you to copy an error, open a browser, paste it into ChatGPT or Claude, and switch back to the terminal.

Chau7 replaces that entire workflow with a single click. The explanation appears inside the Chau7 terminal without any context switching. For developers who hit errors dozens of times per day, skipping the copy, paste, and window switch on every error adds up to a meaningful time saving.

Does Chau7's error explanation send terminal output to the cloud?

Chau7 sends only the error you explicitly choose to analyze, together with the surrounding context it packages into the prompt. No other terminal output is transmitted. Chau7 does not send anything in the background or without your action.

If privacy is a concern, configure a local Ollama instance in Chau7 and nothing leaves your machine. Chau7 gives you full control over which provider processes your error data.
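If you want to double-check that a configured endpoint really is local, a small check along these lines can help. This is a sketch you could run yourself, not something Chau7 is documented to do. The function name `is_local_endpoint` is hypothetical, and the URL used is Ollama's default local address.

```python
import ipaddress
from urllib.parse import urlparse


def is_local_endpoint(url: str) -> bool:
    """Return True if the URL's host is localhost or a loopback IP address.

    Hypothetical helper for illustration only.
    """
    host = urlparse(url).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        # Covers 127.0.0.0/8 and the IPv6 loopback address ::1.
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Any other hostname is treated as remote.
        return False


ollama_is_local = is_local_endpoint("http://localhost:11434/v1")
openai_is_local = is_local_endpoint("https://api.openai.com/v1")
```

The check is deliberately conservative: any hostname other than `localhost` that is not a loopback IP address is treated as remote.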

Questions this answers

- What is LLM Error Explanation in Chau7 terminal?

- How does Chau7's LLM error explanation compare to other terminals?

- How do you explain terminal errors with AI in Chau7?

- Which LLM providers does Chau7 support for error explanation?

- Does Chau7's error explanation send terminal output to the cloud?

Frequently asked questions

What is LLM Error Explanation in Chau7 terminal?

LLM Error Explanation is a feature in the Chau7 terminal that lets you send any terminal error to an LLM provider for instant analysis with a single click. Chau7 packages the error text and surrounding context into a prompt, sends it to your configured provider, and displays the explanation directly in the terminal.

How does Chau7's LLM error explanation compare to other terminals?

No other terminal emulator offers built-in LLM error explanation. iTerm2, Alacritty, Kitty, and Warp require you to copy an error, open a browser, paste it into ChatGPT, and switch back to the terminal. Chau7 replaces that entire workflow with a single click that returns the explanation inside the terminal.

How do you explain terminal errors with AI in Chau7?

When an error appears in Chau7, click the error to send it to your configured LLM provider. Chau7 packages the error text and surrounding context into a prompt and returns the explanation directly in the terminal interface. No browser or copy-paste required.

Which LLM providers does Chau7 support for error explanation?

Chau7 supports OpenAI, Anthropic, Ollama (local), and any custom endpoint that accepts the standard chat completion API format. You configure your preferred provider and API key in Chau7's settings.

Does Chau7's error explanation send terminal output to the cloud?

Chau7 sends only the error you explicitly choose to analyze, together with the surrounding context it packages into the prompt. No other terminal output is transmitted. If privacy is a concern, configure a local Ollama instance in Chau7 and nothing leaves your machine.